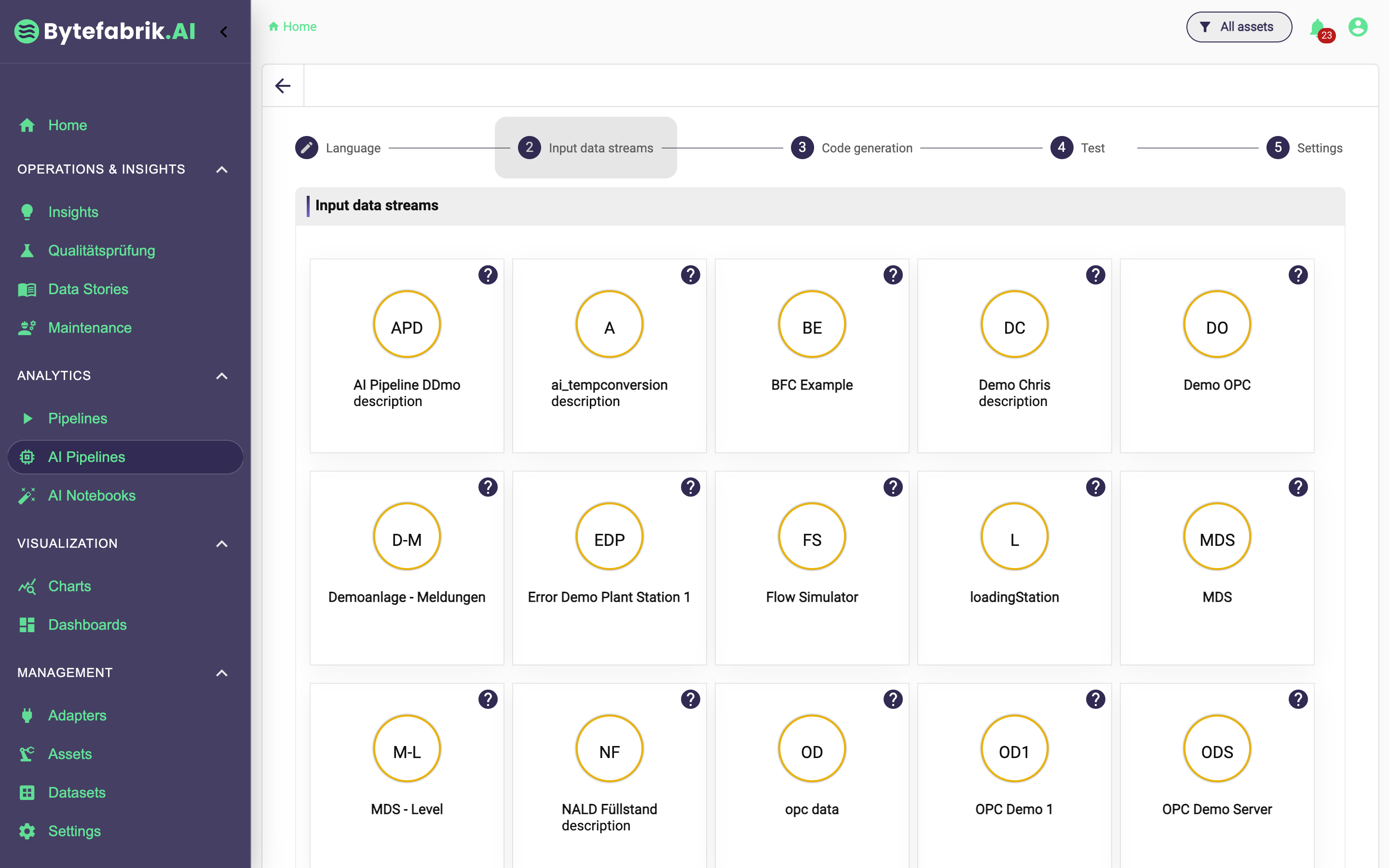

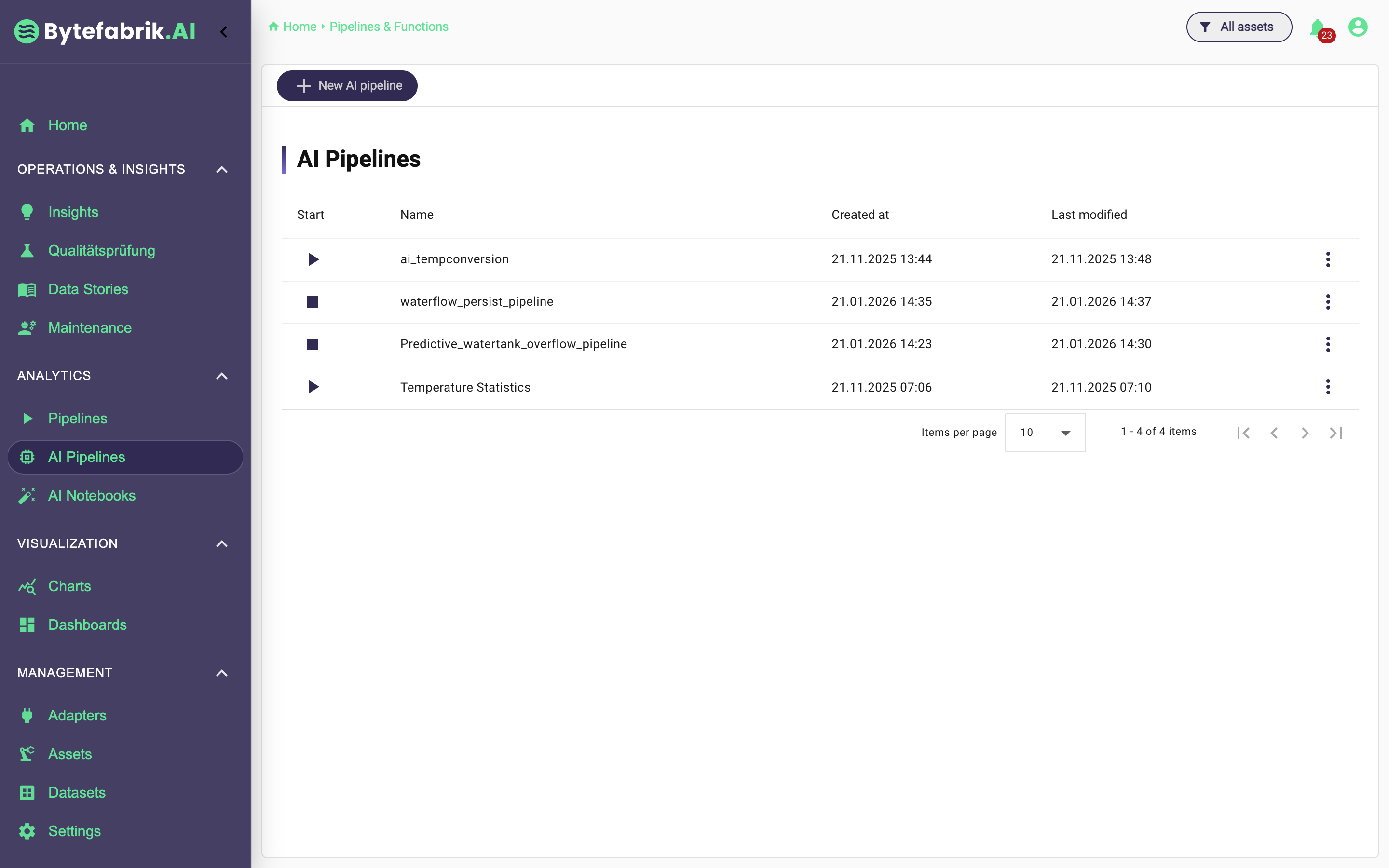

AI pipelines are designed for live data streams. They let you define monitoring, harmonization steps, and analytical logic directly on running machine data, rather than working with exports or one-off scripts after the fact.

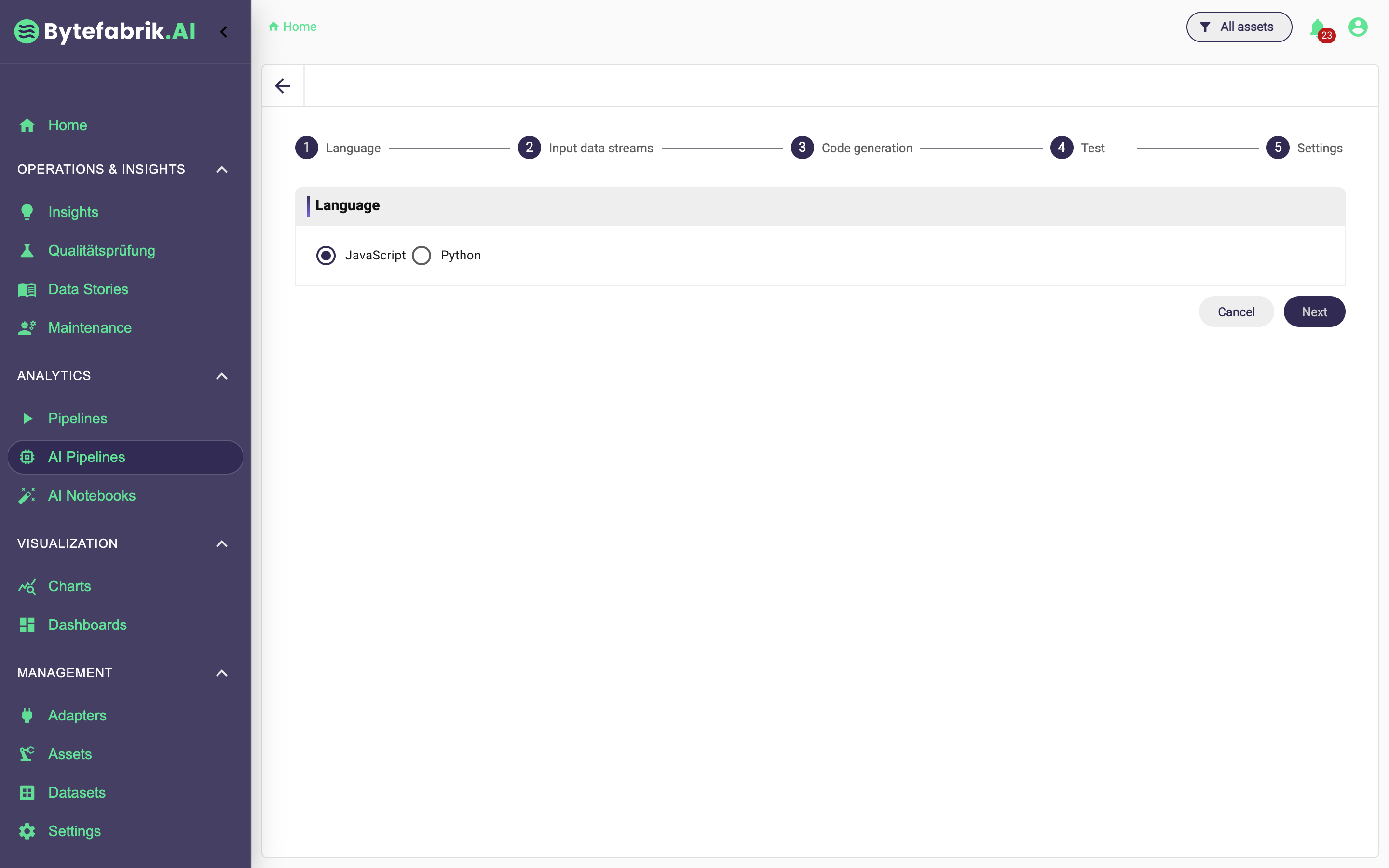

The main difference from classic pipelines is that complex logic no longer has to be assembled solely from reusable graphical blocks. Instead, a user can describe in natural language which live analysis or harmonization is required. This is particularly helpful when graphical blocks become too rigid or too complex for the task at hand.

The platform generates code from this description in a suitable programming language, executes it on high-frequency data streams, and feeds the results back into the platform as live data. This makes even sophisticated evaluations much more accessible in day-to-day work.
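
To make this more concrete, the following is a minimal sketch of the kind of code that could result from a request such as "smooth the temperature over the last three readings and flag values above 80". It is purely illustrative: the names `Sample` and `rolling_mean_alerts`, the window size, and the limit are assumptions made for this example and do not reflect the platform's actual generated code or API.

```python
from collections import deque
from dataclasses import dataclass
from typing import Iterable, Iterator, Tuple


@dataclass(frozen=True)
class Sample:
    """One reading from a live stream (hypothetical structure)."""
    timestamp: float  # seconds since stream start
    value: float      # measured value, e.g. temperature


def rolling_mean_alerts(
    samples: Iterable[Sample],
    window: int = 3,
    limit: float = 80.0,
) -> Iterator[Tuple[Sample, bool]]:
    """Yield a smoothed sample and an alert flag for every incoming sample.

    The smoothed value is the mean of the most recent `window` readings.
    The alert flag is True when that mean is strictly above `limit`.
    """
    buffer = deque(maxlen=window)  # keeps only the newest `window` values
    for sample in samples:
        buffer.append(sample.value)
        mean = sum(buffer) / len(buffer)
        yield Sample(sample.timestamp, mean), mean > limit


# Usage: a short list stands in for a live stream here.
stream = [Sample(0.0, 70.0), Sample(1.0, 80.0), Sample(2.0, 90.0), Sample(3.0, 100.0)]
for smoothed, alert in rolling_mean_alerts(stream):
    print(smoothed.timestamp, smoothed.value, alert)
```

Because the function consumes and produces samples one at a time, its output can itself be treated as a stream, which mirrors the idea of making results available again as live data.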